About the Customer

Infopercept is a global, platform-led Managed Security Services Provider (MSSP) that delivers advanced cybersecurity solutions across IT, Cloud, OT, and IoT environments. Its offerings span SIEM, SOAR, EDR, Threat Intelligence, Vulnerability Management, Digital Forensics, and Red/Blue/Purple Team operations. As Infopercept rapidly expanded its security platform capabilities, maintaining accurate, up-to-date internal knowledge became mission-critical.

Business Challenge

Infopercept’s security platform and service offerings were evolving continuously, leading to rapid growth in internal documentation, playbooks, and standard operating procedures. This created several challenges:

- A large and constantly expanding knowledge base made information discovery time-consuming

- Critical security insights risked being overlooked due to manual search limitations

- Subject matter experts often avoided consulting documentation because of poor retrieval efficiency

- Maintaining accuracy and relevance across documents required significant manual effort

- Delays in information access directly impacted operational efficiency and response quality

Infopercept required a secure, scalable, and AI-driven knowledge retrieval system that could improve accuracy while significantly reducing time to insight.

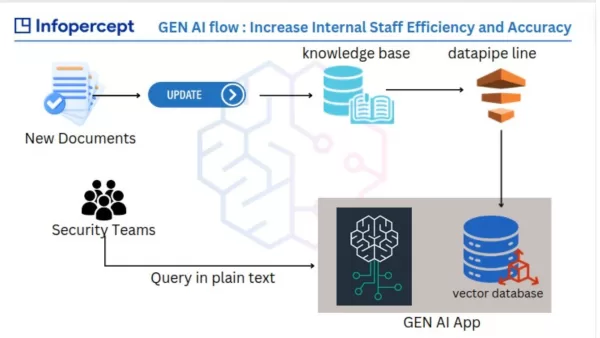

Solution Overview

Electromech Cloudtech designed and implemented a Generative AI–powered knowledge intelligence platform using AWS-native AI and analytics services. The solution uses a Retrieval-Augmented Generation (RAG) architecture: each answer is generated from document passages retrieved at query time, which keeps responses accurate and context-aware while preserving data security and compliance.

The system automatically ingests documentation, enriches it using AI services, and enables employees to query the knowledge base using natural language.

AWS AI Services Implemented

The solution was built using the following AWS AI and ML services:

- Amazon Bedrock – Foundation model inference (Claude) for secure, enterprise-grade generative AI

- Amazon SageMaker – Embedding model hosting and text embedding generation

- Amazon Comprehend – Entity recognition, key phrase extraction, and document classification

- Amazon Textract – Text extraction from structured and unstructured documents

- Amazon Aurora PostgreSQL (pgvector) – Vector similarity search for semantic retrieval

Supporting services included AWS Lambda, Amazon API Gateway, Amazon S3, Amazon CloudFront, Amazon DynamoDB, Amazon Cognito, AWS WAF, IAM, Amazon Athena, and Amazon CloudWatch.

Technical Architecture Overview

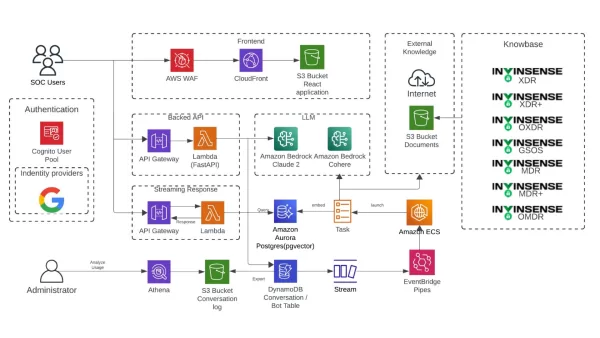

High-Level Architecture Components

- Frontend: React application hosted on Amazon S3 and delivered via Amazon CloudFront

- Authentication: Amazon Cognito with role-based access control

- API Layer: Amazon API Gateway invoking AWS Lambda functions

- AI Orchestration: AWS Lambda for ingestion, enrichment, retrieval, and generation workflows

- Data Storage:

  - Amazon S3 for document storage

  - Amazon Aurora PostgreSQL (pgvector) for vector embeddings

  - Amazon DynamoDB for conversation history and session tracking
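The API layer described above can be illustrated with a minimal sketch of a Lambda handler behind an API Gateway proxy integration. Everything specific here is an assumption for illustration: the `make_handler` factory, the `allowed_groups` value, the `question` field in the request body, and the way the `cognito:groups` claim is parsed (its exact encoding depends on the authorizer type). The case study does not describe the actual handler code.

```python
import json


def _groups(claims):
    """Normalize the `cognito:groups` claim to a set of group names.

    Assumption: the claim may arrive as a list or as a delimited string.
    """
    raw = claims.get("cognito:groups", [])
    if isinstance(raw, str):
        raw = raw.replace("[", "").replace("]", "").replace(",", " ").split()
    return set(raw)


def _response(status, payload):
    """Build a response in the shape expected by a Lambda proxy integration."""
    return {
        "statusCode": status,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(payload),
    }


def make_handler(answer_question, allowed_groups=("analysts",)):
    """Return a Lambda handler that delegates to `answer_question(question, user_id)`.

    `answer_question` is a hypothetical callable wrapping retrieval and generation.
    """

    def handler(event, context):
        claims = (
            event.get("requestContext", {}).get("authorizer", {}).get("claims", {})
        )
        # Role-based access control: require membership in an allowed group.
        if not _groups(claims) & set(allowed_groups):
            return _response(403, {"error": "forbidden"})
        try:
            question = json.loads(event.get("body") or "{}")["question"].strip()
        except (KeyError, TypeError, AttributeError, ValueError):
            return _response(400, {"error": "body must be JSON with a 'question' string"})
        if not question:
            return _response(400, {"error": "question must not be empty"})
        return _response(200, {"answer": answer_question(question, claims.get("sub"))})

    return handler
```

Passing the answering logic in as a callable keeps the handler free of AWS client setup, which makes it straightforward to exercise locally with a stub.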

Detailed Data Flow Architecture

1. Document Ingestion

- Security documentation, SOPs, and platform manuals are uploaded to Amazon S3

- Amazon Textract extracts text from PDFs, DOCX files, and scanned documents
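As an illustration of the ingestion step above, the following sketch runs Textract's asynchronous text detection on an S3 object and joins the detected lines. It assumes a client that behaves like `boto3.client("textract")` is passed in; the function names, the polling interval, and the bucket and key arguments are hypothetical. Textract's detection APIs accept PDF and image formats, so the sketch assumes any other format has already been converted.

```python
import time


def lines_from_blocks(blocks):
    """Join the text of LINE blocks from a Textract response, in order."""
    return "\n".join(b["Text"] for b in blocks if b.get("BlockType") == "LINE")


def extract_text(textract, bucket, key, poll_seconds=2.0):
    """Run asynchronous text detection on an S3 object and return its text."""
    job = textract.start_document_text_detection(
        DocumentLocation={"S3Object": {"Bucket": bucket, "Name": key}}
    )
    blocks, token = [], None
    while True:
        kwargs = {"JobId": job["JobId"]}
        if token:
            kwargs["NextToken"] = token
        page = textract.get_document_text_detection(**kwargs)
        status = page["JobStatus"]
        if status == "IN_PROGRESS":
            # Simple polling; a production pipeline might use the SNS
            # completion notification instead.
            time.sleep(poll_seconds)
            continue
        if status not in ("SUCCEEDED", "PARTIAL_SUCCESS"):
            raise RuntimeError(f"Textract job ended with status {status}")
        blocks.extend(page.get("Blocks", []))
        token = page.get("NextToken")
        if not token:
            return lines_from_blocks(blocks)
```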

2. Content Enrichment and Classification

- Extracted text is processed using Amazon Comprehend to:

  - Identify named entities

  - Extract key security terms

  - Classify documents by domain and risk relevance

- Enriched metadata is appended to each document segment
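The enrichment step above might look roughly like the sketch below, which attaches entities, key phrases, and an optional classification label to one document segment. It assumes a client that behaves like `boto3.client("comprehend")`. The `enrich_segment` name, the `min_score` threshold, the metadata field names, and the idea of a custom classifier endpoint (`classifier_endpoint_arn`) are illustrative assumptions, not details taken from the deployment.

```python
def enrich_segment(comprehend, text, language="en", min_score=0.8,
                   classifier_endpoint_arn=None):
    """Return the segment text together with Comprehend-derived metadata."""
    entities = comprehend.detect_entities(Text=text, LanguageCode=language)["Entities"]
    phrases = comprehend.detect_key_phrases(Text=text, LanguageCode=language)["KeyPhrases"]
    metadata = {
        # Keep only confident detections and de-duplicate them.
        "entities": sorted(
            {(e["Type"], e["Text"]) for e in entities if e["Score"] >= min_score}
        ),
        "key_phrases": sorted(
            {p["Text"] for p in phrases if p["Score"] >= min_score}
        ),
        "domain": None,
    }
    if classifier_endpoint_arn:
        # Classification requires a custom classifier endpoint (assumed here).
        result = comprehend.classify_document(
            Text=text, EndpointArn=classifier_endpoint_arn
        )
        classes = result.get("Classes", [])
        if classes:
            metadata["domain"] = max(classes, key=lambda c: c["Score"])["Name"]
    return {"text": text, "metadata": metadata}
```

Storing the metadata alongside each segment allows later retrieval to filter by entity or domain before, or in addition to, ranking by vector similarity.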

3. Embedding Generation and Indexing

- Cleaned and enriched text is passed to Amazon SageMaker endpoints

- SageMaker generates vector embeddings for semantic search

- Embeddings are stored in Amazon Aurora PostgreSQL using pgvector
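A minimal sketch of the indexing step above follows: text is split into overlapping chunks, each chunk is embedded through a SageMaker endpoint, and the vector is written to a pgvector column. Several details are assumptions: the `doc_chunks` table and its columns, the chunk sizes, the `{"inputs": ...}` request payload and flat-list response (both depend on the hosted model), and the `%s` placeholder style of a psycopg-like DB-API cursor.

```python
import json

# Hypothetical table: doc_chunks(doc_id, chunk_no, chunk, metadata, embedding vector)
INSERT_SQL = (
    "INSERT INTO doc_chunks (doc_id, chunk_no, chunk, metadata, embedding) "
    "VALUES (%s, %s, %s, %s, %s::vector)"
)


def chunk_text(text, max_words=200, overlap=40):
    """Split text into word windows that overlap by `overlap` words."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    if not words:
        return []
    step = max_words - overlap
    last_start = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + max_words]) for i in range(0, last_start, step)]


def embed(sm_runtime, endpoint_name, text):
    """Call a SageMaker endpoint; payload and response shapes are assumed."""
    resp = sm_runtime.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=json.dumps({"inputs": text}),
    )
    return [float(x) for x in json.loads(resp["Body"].read())]


def to_pgvector(vec):
    """Render a vector in pgvector's text format, e.g. [0.5,1.0]."""
    return "[" + ",".join(repr(float(x)) for x in vec) + "]"


def index_document(cursor, sm_runtime, endpoint_name, doc_id, text, metadata):
    """Embed every chunk of a document and insert it; returns the chunk count."""
    chunks = chunk_text(text)
    for n, chunk in enumerate(chunks):
        vec = embed(sm_runtime, endpoint_name, chunk)
        cursor.execute(
            INSERT_SQL, (doc_id, n, chunk, json.dumps(metadata), to_pgvector(vec))
        )
    return len(chunks)
```

Overlapping windows reduce the chance that a procedure step is split across two chunks with neither chunk carrying enough context to be retrieved.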

4. Query Processing and Retrieval (RAG Pattern)

- Users submit natural language queries via the web interface

- Query embeddings are generated using Amazon SageMaker

- Relevant document chunks are retrieved using vector similarity search

- Retrieved context is dynamically injected into prompts
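The retrieval steps above can be sketched as a pgvector nearest-neighbour query followed by prompt assembly. The sketch assumes a hypothetical `doc_chunks` table with an `embedding` column, a psycopg-style cursor, and cosine distance as the similarity measure (pgvector's `<=>` operator); the case study does not state which distance metric or prompt wording was used. The pure-Python `cosine_distance` is included only to show what the operator computes.

```python
import math

# The query vector is passed twice: once for the returned distance,
# once for the ORDER BY expression.
SEARCH_SQL = (
    "SELECT doc_id, chunk, embedding <=> %s::vector AS distance "
    "FROM doc_chunks ORDER BY embedding <=> %s::vector LIMIT %s"
)


def cosine_distance(a, b):
    """1 - cosine similarity, the quantity pgvector's <=> operator returns."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


def retrieve(cursor, query_vec, k=5):
    """Return the k nearest chunks as (doc_id, chunk, distance) rows."""
    literal = "[" + ",".join(repr(float(x)) for x in query_vec) + "]"
    cursor.execute(SEARCH_SQL, (literal, literal, k))
    return cursor.fetchall()


def build_prompt(question, rows):
    """Inject retrieved chunks into a prompt, numbering each source."""
    context = "\n\n".join(
        f"[{i}] (source: {doc_id})\n{chunk}"
        for i, (doc_id, chunk, _distance) in enumerate(rows, 1)
    )
    return (
        "Answer the question using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
```

Numbering the sources in the prompt makes it possible to ask the model to cite them, which supports the auditability goals described later in this document.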

5. Generative Response Generation

- Amazon Bedrock (Claude foundation model) generates responses grounded in the retrieved context

- Model invocation occurs via private AWS endpoints

- Customer data is not used to train or fine-tune foundation models
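As a sketch of the generation step above, the function below sends an assembled prompt to a Claude model through the Bedrock runtime `invoke_model` call using the Anthropic Messages request format. It assumes a client that behaves like `boto3.client("bedrock-runtime")`; the `generate_answer` name, the system instruction, and the token limit are illustrative, and the model identifier is left as a parameter because the case study does not name a specific Claude version.

```python
import json


def generate_answer(bedrock_runtime, model_id, prompt, max_tokens=512):
    """Invoke a Claude model on Bedrock and return the generated text."""
    body = {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        # Hypothetical system instruction for a grounded internal assistant.
        "system": "You answer questions about internal security documentation.",
        "messages": [
            {"role": "user", "content": [{"type": "text", "text": prompt}]}
        ],
    }
    resp = bedrock_runtime.invoke_model(
        modelId=model_id,
        contentType="application/json",
        accept="application/json",
        body=json.dumps(body),
    )
    payload = json.loads(resp["body"].read())
    # The response content is a list of blocks; concatenate the text ones.
    return "".join(
        part["text"] for part in payload["content"] if part.get("type") == "text"
    )
```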

6. Session Management and Analytics

- User interactions and conversation logs are stored in Amazon DynamoDB

- Logs and usage metrics are analyzed using Amazon Athena

- System performance and usage are monitored through Amazon CloudWatch
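To illustrate the session-tracking step above, the sketch below writes one conversation turn to DynamoDB and reads back the most recent turns for a session. It assumes a boto3 DynamoDB `Table` resource and a hypothetical key schema with `session_id` as the partition key and `turn_ts` (an integer millisecond timestamp) as the sort key; the attribute names are illustrative only.

```python
def build_turn_item(session_id, user_id, question, answer, source_ids, now_ms):
    """Shape one conversation turn as a DynamoDB item.

    The timestamp is stored as an int because the boto3 resource layer
    does not accept Python floats.
    """
    return {
        "session_id": session_id,
        "turn_ts": int(now_ms),
        "user_id": user_id,
        "question": question,
        "answer": answer,
        "source_ids": list(source_ids),
    }


def save_turn(table, **fields):
    """Persist a turn and return the stored item."""
    item = build_turn_item(**fields)
    table.put_item(Item=item)
    return item


def recent_turns(table, session_id, limit=10):
    """Return up to `limit` latest turns for a session, oldest first."""
    resp = table.query(
        KeyConditionExpression="session_id = :s",
        ExpressionAttributeValues={":s": session_id},
        ScanIndexForward=False,  # newest first, so Limit keeps the latest turns
        Limit=limit,
    )
    return list(reversed(resp["Items"]))
```

Recording the identifiers of the retrieved source chunks with each turn gives the analytics layer enough information to see which documents are actually being used.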

AI Model Deployment Details

- Foundation Models:

  - Deployed via Amazon Bedrock with no infrastructure management

  - Fully isolated within the customer’s AWS account

- Embedding Models:

  - Hosted on Amazon SageMaker with autoscaling endpoints

  - Used exclusively for embedding generation, not model training

- Security Controls:

  - IAM-based access to AI services

  - AWS WAF protects public endpoints

  - End-to-end encryption in transit and at rest
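The IAM-based access control listed above can be made concrete with a sketch of a least-privilege policy for the query-handling Lambda role. The function name, statement IDs, and the choice of which actions the role needs are assumptions; the region, account, model, endpoint, and table identifiers are parameters because the case study does not disclose them.

```python
import json


def build_query_lambda_policy(region, account_id, model_id, endpoint_name, table_name):
    """Return an IAM policy document scoped to specific resources only."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeFoundationModel",
                "Effect": "Allow",
                "Action": ["bedrock:InvokeModel"],
                "Resource": [
                    f"arn:aws:bedrock:{region}::foundation-model/{model_id}"
                ],
            },
            {
                "Sid": "InvokeEmbeddingEndpoint",
                "Effect": "Allow",
                "Action": ["sagemaker:InvokeEndpoint"],
                "Resource": [
                    f"arn:aws:sagemaker:{region}:{account_id}:endpoint/{endpoint_name}"
                ],
            },
            {
                "Sid": "ConversationHistory",
                "Effect": "Allow",
                "Action": ["dynamodb:PutItem", "dynamodb:Query"],
                "Resource": [
                    f"arn:aws:dynamodb:{region}:{account_id}:table/{table_name}"
                ],
            },
        ],
    }


def render_policy(policy):
    """Serialize the policy document as indented JSON."""
    return json.dumps(policy, indent=2)
```

Each statement names explicit actions and a single resource ARN, so the role cannot invoke other models, endpoints, or tables.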

Service Integration Approach

| Layer | Integration Method |
|---|---|
| Frontend | HTTPS via CloudFront |
| API Layer | REST APIs using Amazon API Gateway |
| AI Workflows | Event-driven AWS Lambda |
| Vector Search | pgvector similarity queries |
| LLM Access | Amazon Bedrock SDK |
| Authentication | Amazon Cognito + IAM |
| Monitoring | Amazon CloudWatch |

Business Outcomes and Benefits

- Reduced knowledge retrieval time from minutes to seconds

- Improved documentation accuracy and consistency

- Increased internal adoption of knowledge resources

- Achieved 2x–10x improvement in employee efficiency across teams

- Enabled secure enterprise GenAI adoption without data exposure

Security and Responsible AI Practices

- All AI workloads run within Infopercept’s AWS account

- No customer data is used to train foundation models

- Strict IAM policies enforce least-privilege access

- Full auditability through centralized logging and monitoring

Conclusion

By leveraging AWS Generative AI and ML services, Infopercept transformed its internal knowledge management into a secure, scalable, and intelligent system. The solution demonstrates a real-world enterprise deployment of AWS AI services, aligning strongly with AWS AI Competency requirements while delivering measurable operational impact.